Voice AI for Mental Health & Community Health Services — Intake Support with Human-First Escalation

Mental health and community health service lines often handle high call volumes that mix appointment requests, referral inquiries, and crisis signals, frequently from vulnerable callers. Peak Demand delivers fully managed, custom-built Voice AI for Mental Health Services designed to support structured intake, policy-aligned routing, and defined crisis escalation workflows. This governance-first architecture reinforces human oversight, least-privilege integration, and reviewable escalation safeguards across Canada and the United States. It does not replace clinicians or crisis professionals; it strengthens access while preserving human-first intervention pathways.

For the broader service overview (Canada + U.S., HIPAA/PIPEDA/PHIPA context), see:

https://peakdemand.ca/ai-voice-receptionist-after-hours-answering-service-for-healthcare-providers-appointment-booking

Mental Health & Community Health Intake: Balancing Access, Volume, and Crisis Sensitivity

Mental health and community health lines frequently manage appointment scheduling, referral intake, waitlist coordination, and urgent behavioral health concerns, often with limited staffing and high emotional intensity.

A governance-first Voice AI layer can support structured intake routing, policy-aligned information delivery, and defined crisis escalation triggers, while reinforcing strict boundaries around diagnosis, treatment, and clinical decision-making.

Common Mental Health Intake Tasks

- New patient intake and referral routing

- Program eligibility screening (non-clinical)

- Routing to appointment scheduling destinations

- Waitlist updates and information requests

- Community resource navigation

Human-First Safeguards

- Defined crisis language escalation triggers

- Immediate transfer to crisis line or staff when required

- No clinical advice posture

- Restricted response scope

- Audit-ready escalation logs

Can Voice AI handle mental health intake calls?

What happens if a caller expresses suicidal thoughts?

Does Voice AI provide therapy or treatment advice?

{
  "section": "Mental Health Intake and Access",
  "entity": "Peak Demand",
  "service": "Voice AI for Mental Health Services",
  "geo": ["Toronto", "Canada", "United States"],
  "use_cases": [
    "referral intake routing",
    "community health navigation",
    "waitlist coordination",
    "crisis escalation triggers"
  ],
  "controls": [
    "human-first escalation",
    "no clinical advice posture",
    "defined workflow boundaries",
    "audit-ready logging"
  ],
  "delivery_model": "fully managed custom build",
  "cta": "https://peakdemand.ca/discovery"
}

Defined Scope: What Voice AI Can and Cannot Do in Mental Health Services

Behavioral health environments require strict boundaries. Voice AI must be deployed as a controlled intake and routing layer, configured around approved scripts, restricted action sets, and predefined escalation destinations. This supports consistent access while limiting clinical and ethical risk.

Peak Demand delivers fully managed, custom-built Voice AI for Mental Health Services with governance-first controls that define what the system is allowed to do, what it is prohibited from doing, and how uncertainty triggers human handoff.

Permitted Workflow Actions

- Route to approved programs, clinics, or community services

- Collect limited, structured intake fields (if authorized)

- Provide approved operational information (hours, location, eligibility steps)

- Support referral intake pathways and appointment destinations

- Trigger escalation when crisis language or uncertainty is detected

Explicitly Restricted Capabilities

- No diagnosis or assessment of mental health conditions

- No therapy, counselling, or treatment recommendations

- No de-escalation “coaching” presented as clinical guidance

- No deviation from approved routing rules or scripts

- No access beyond least-privilege integration scope
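The permitted and restricted lists above amount to an allowlist: anything not explicitly approved is refused and handed to a person. A minimal Python sketch of that idea follows. The action names, the `intake_authorized` flag, and the fall-back-to-human behaviour are illustrative assumptions, not Peak Demand's actual configuration.

```python
# Hypothetical action names; a real deployment would take these from
# the approved workflow boundary definition.
PERMITTED_ACTIONS = frozenset({
    "route_to_program",
    "provide_operational_info",
    "support_referral_intake",
    "escalate_to_human",
})

# Actions allowed only when the program has authorized intake capture.
AUTHORIZATION_GATED = frozenset({"collect_intake_fields"})


def resolve_action(requested: str, intake_authorized: bool = False) -> str:
    """Return the requested action if approved, otherwise hand off to a human."""
    if requested in PERMITTED_ACTIONS:
        return requested
    if requested in AUTHORIZATION_GATED and intake_authorized:
        return requested
    # Anything outside the allowlist (diagnosis, treatment advice, etc.)
    # is never executed; the call is escalated instead.
    return "escalate_to_human"
```

The design choice here is deny-by-default: new capabilities have to be added to the allowlist deliberately, which is what makes scope changes reviewable.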

Can Voice AI diagnose mental health conditions?

Can we control exactly what the Voice AI is allowed to say?

Does Voice AI replace therapists or crisis counsellors?

What happens if the Voice AI is not sure what the caller needs?

{
  "section": "Defined Scope and Workflow Boundaries (Mental Health)",
  "entity": "Peak Demand",
  "service": "Voice AI for Mental Health Services",
  "geo": ["Toronto", "Canada", "United States"],
  "use_cases": [
    "intake and referral routing",
    "approved information delivery",
    "structured intake capture (if authorized)",
    "defined escalation to crisis resources"
  ],
  "controls": [
    "restricted action sets",
    "approved scripts and routing rules",
    "uncertainty-to-human handoff",
    "least-privilege integration posture",
    "audit-ready logging"
  ],
  "delivery_model": "fully managed custom build",
  "cta": "https://peakdemand.ca/discovery"
}

Crisis Escalation: Defined Triggers, Immediate Handoff, and Reviewable Safeguards

Mental health service lines may receive callers in distress, callers expressing self-harm ideation, or callers who cannot safely navigate intake steps. A Voice AI intake layer must be configured so crisis signals trigger immediate escalation, and uncertainty defaults to human-first handoff rather than extended conversation.

Escalation pathways are defined during governance review: which destinations are allowed (crisis line, on-call clinician, centralized triage team), how after-hours coverage is handled, and how escalation events are logged for audit visibility.

Escalation Triggers (Examples)

- Self-harm indicators: statements implying intent or imminent risk

- Harm-to-others signals: threats or stated intent to harm

- Severe distress: panic, confusion, inability to answer basic questions

- Acute safety concerns: caller reports immediate danger

- Low confidence / uncertainty: system cannot classify intent safely

Safeguards & Controls

- Immediate human escalation: no “try again” loops for crisis contexts

- Defined destinations: approved transfer targets by time-of-day

- Restricted responses: no therapy or clinical guidance

- Audit-ready logging: escalation reason codes and routing outcomes

- Change control: trigger updates reviewed before release
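One way to read the triggers and safeguards above is as a two-step check: crisis categories escalate immediately, and any other call escalates when classification confidence is low. The sketch below assumes an upstream classifier that returns a category label and a confidence score. The category names, reason codes, and the 0.80 threshold are hypothetical placeholders that a governance review would set.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical category labels mirroring the example triggers.
CRISIS_CATEGORIES = frozenset({
    "self_harm",
    "harm_to_others",
    "severe_distress",
    "acute_safety",
})

# Hypothetical threshold; below this the system does not trust its own
# classification and hands the call to a person.
CONFIDENCE_FLOOR = 0.80


@dataclass(frozen=True)
class Classification:
    category: str
    confidence: float


def escalation_reason(result: Classification) -> Optional[str]:
    """Return a reason code if the call must go to a human, else None."""
    # Crisis signals escalate regardless of confidence: no retry loop.
    if result.category in CRISIS_CATEGORIES:
        return f"crisis:{result.category}"
    # Uncertainty defaults to human handoff.
    if result.confidence < CONFIDENCE_FLOOR:
        return "low_confidence"
    return None
```

Checking the crisis category before the confidence score means a weakly detected crisis signal still escalates, and the returned reason code is what would be written to the escalation log.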

What happens if a caller says they want to hurt themselves?

Can Voice AI detect a mental health crisis?

Does Voice AI provide de-escalation or counselling?

What if the Voice AI is not sure what the caller means?

{
  "section": "Crisis Escalation and Human-First Safeguards (Mental Health)",
  "entity": "Peak Demand",
  "service": "Voice AI for Mental Health Services",
  "geo": ["Toronto", "Canada", "United States"],
  "use_cases": [
    "crisis escalation triggers",
    "defined transfer to approved human destinations",
    "after-hours coverage routing",
    "audit-ready escalation reporting"
  ],
  "controls": [
    "immediate human-first handoff for crisis signals",
    "uncertainty-to-human escalation",
    "restricted responses (no clinical advice)",
    "escalation reason codes and routing outcomes",
    "change control for trigger updates"
  ],
  "delivery_model": "fully managed custom build",
  "cta": "https://peakdemand.ca/discovery"
}

Community Health Routing: Program Navigation Without Clinical Decision-Making

Community mental health networks often span outpatient programs, community supports, mobile teams, addiction services, social work, and crisis resources. Callers frequently struggle to identify the right entry point, leading to repeated calls, misroutes, and delayed access.

Voice AI can be configured to support policy-aligned routing across approved service directories while maintaining explicit boundaries: it does not assess clinical severity, and it escalates when uncertainty or crisis language appears.

Common Community Health Routing Paths

- Referral intake to outpatient programs and clinics

- Addiction and substance use support navigation (program routing only)

- Community counselling service routing (intake destinations)

- Social work and housing support line routing

- Resource navigation and eligibility steps (operational information)

Controls That Protect Governance

- Approved directory only: no open-ended referrals

- Non-clinical routing posture: no diagnosis or severity scoring

- Escalation-first for crisis: defined human destinations

- Policy-aligned scripts: standardized information delivery

- Audit visibility: reviewable routing and escalation outcomes

Can Voice AI route people to the right mental health program?

Can Voice AI decide how severe someone’s situation is?

Can Voice AI help with addiction or substance use calls?

Can we restrict the routing options to a pre-approved directory?

{
  "section": "Community Health Routing and Program Navigation",
  "entity": "Peak Demand",
  "service": "Voice AI for Mental Health Services",
  "geo": ["Toronto", "Canada", "United States"],
  "use_cases": [
    "program directory routing",
    "referral intake navigation",
    "community resource information delivery",
    "routing to approved addiction support destinations",
    "human-first escalation for crisis signals"
  ],
  "controls": [
    "approved directory only",
    "non-clinical routing posture",
    "policy-aligned scripts",
    "audit-ready routing outcomes",
    "defined escalation pathways"
  ],
  "delivery_model": "fully managed custom build",
  "cta": "https://peakdemand.ca/discovery"
}

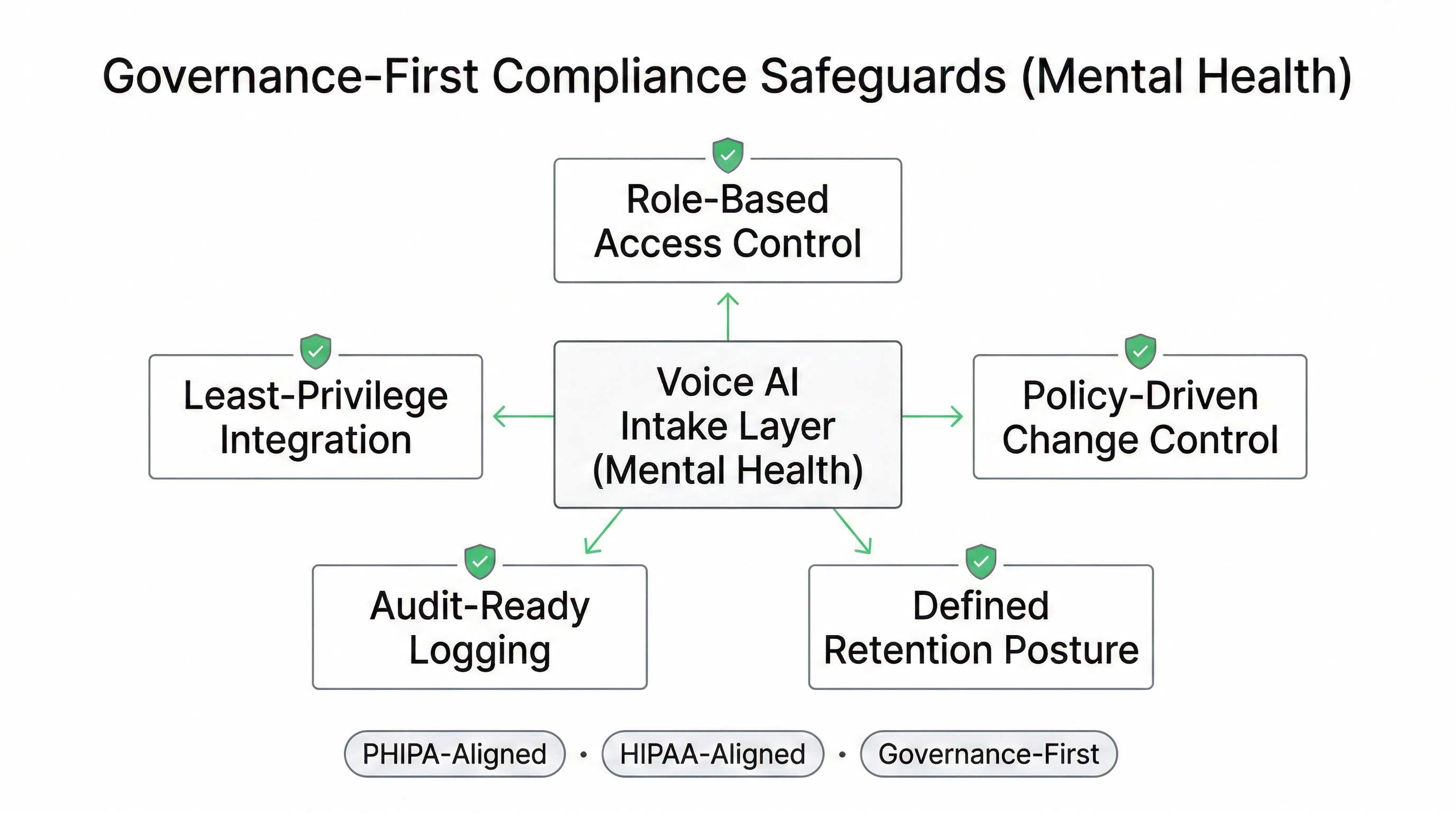

Governance-First Compliance Posture: PHIPA and HIPAA-Aligned Safeguards for Mental Health Lines

Mental health intake and community health lines may involve sensitive personal health information and heightened risk. Deployments should be structured to align with applicable regulatory expectations through defined workflow boundaries, least-privilege access, retention posture controls, and audit-ready logging, rather than broad “general AI” capabilities.

Peak Demand delivers fully managed Voice AI deployments designed to support governance review by privacy officers, IT security, and procurement teams across Canada and the United States. This is procurement-aware architecture: documented scope, reviewable controls, and defined escalation pathways that reduce compliance drift over time.

Privacy & Security Safeguards

- Role-Based Access Control: restricted admin roles and permissions

- Least-Privilege Integration: minimum required functions and data fields

- Defined Retention Posture: configurable storage and log duration

- Audit-Ready Logging: reviewable routing and escalation outcomes

- Policy-Driven Deployment: approved scripts, routing rules, and change control

Behavioral Health-Specific Governance

- Crisis sensitivity: escalation triggers defined and tested

- Restricted responses: no diagnosis, therapy, or treatment guidance

- Human-first safeguards: immediate handoff for risk or uncertainty

- Escalation accountability: reason codes and destination tracking

- Ongoing governance: updates reviewed to prevent scope expansion
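A defined retention posture can be pictured as a configurable window after which log records become eligible for deletion. The sketch below is illustrative only: the record shape, the `created_at` field, and the 90-day value are assumptions, and actual retention periods would come from the organization's own policy and legal review.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical default; the real value is a policy decision.
RETENTION_DAYS = 90


def records_past_retention(records, now, retention_days=RETENTION_DAYS):
    """Return the records whose age exceeds the configured retention window."""
    cutoff = now - timedelta(days=retention_days)
    return [r for r in records if r["created_at"] < cutoff]


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    sample = [
        {"id": "log-1", "created_at": now - timedelta(days=200)},
        {"id": "log-2", "created_at": now - timedelta(days=5)},
    ]
    for record in records_past_retention(sample, now):
        print("eligible for deletion:", record["id"])
```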

Is Voice AI for mental health services HIPAA compliant?

Does this align with PHIPA for mental health programs in Ontario?

Can our privacy officer review exactly what data is collected and retained?

Do you guarantee regulatory compliance or “certification”?

{
  "section": "Compliance and Governance Posture (Mental Health)",
  "entity": "Peak Demand",
  "service": "Voice AI for Mental Health Services",
  "geo": ["Toronto", "Canada", "United States"],
  "use_cases": [
    "governance-first intake routing",
    "behavioral health escalation safeguards",
    "audit-ready routing and escalation logs"
  ],
  "controls": [
    "role-based access control",
    "least-privilege integration posture",
    "defined retention posture",
    "policy-driven deployment and change control",
    "audit-ready logging"
  ],
  "compliance_alignment": [
    "PHIPA-aligned deployment (Canada)",
    "HIPAA-aligned safeguards (United States)"
  ],
  "delivery_model": "fully managed custom build",
  "cta": "https://peakdemand.ca/discovery"
}

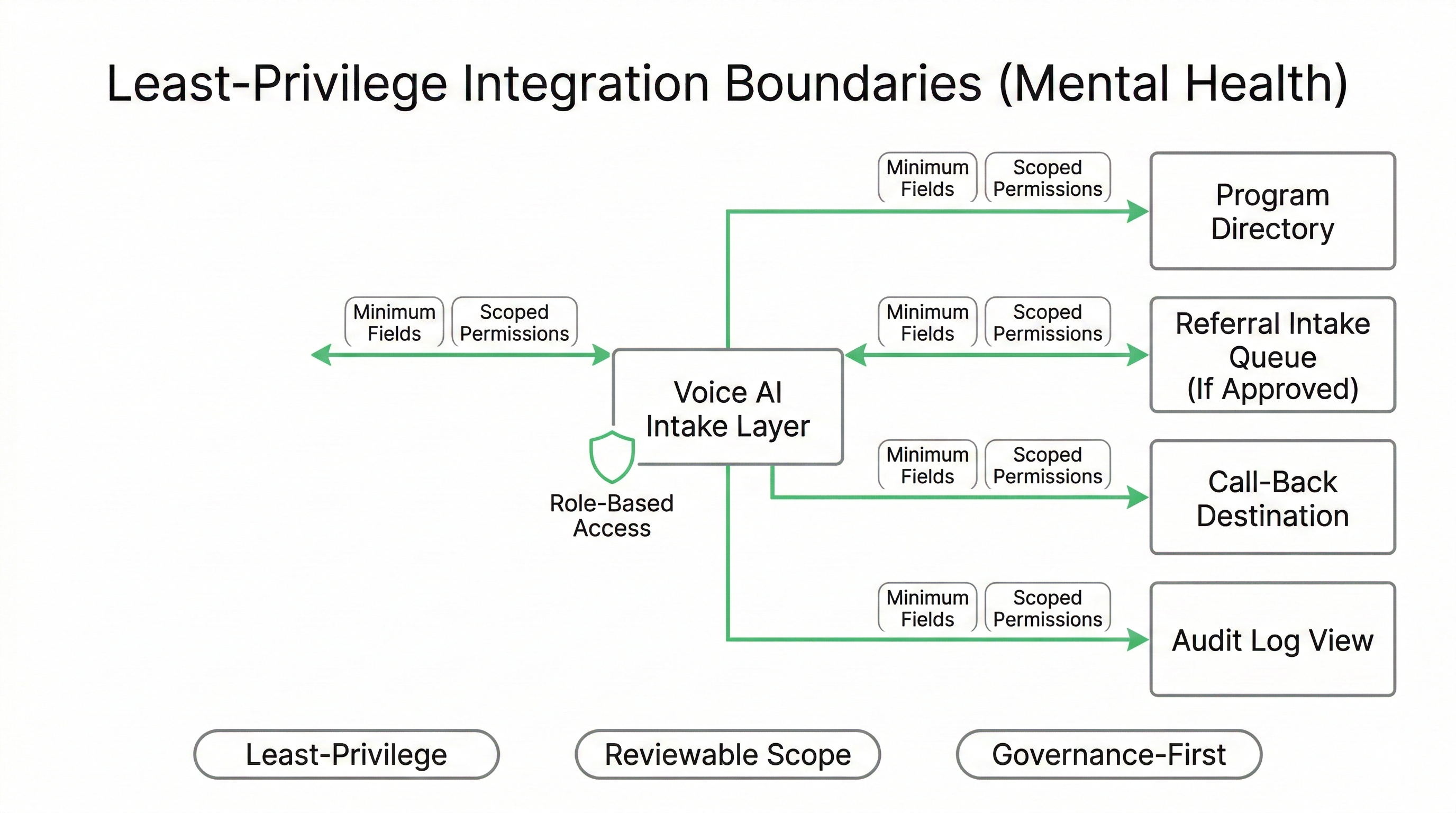

Integration Boundaries: Least-Privilege Access for Behavioral Health Workflows

Mental health and community health programs often operate across multiple systems: scheduling tools, referral intake queues, program directories, and call centre platforms. Integrations should be structured around explicit permissions, minimum required data fields, and restricted workflow actions, so privacy and IT security teams can review scope before activation.

Many routing and escalation deployments can run with minimal integration. Where connections are required, they follow a least-privilege posture: limited functions, segmented environments, and audit visibility aligned with governance-first requirements.

Common Integration Patterns (Mental Health)

- Directory routing: approved programs, locations, hours, and eligibility steps

- Referral intake queues: create a governed ticket or queue item (if authorized)

- Call-back capture: limited intake fields sent to an approved destination

- Scheduling destinations: transfer to centralized booking where appropriate

- Audit views: exportable routing and escalation logs for review

Least-Privilege Controls

- Minimum fields: capture only what the workflow requires

- Scoped permissions: approved create/read/update actions only

- Segmentation: test vs production environments

- Access governance: role-based admin controls and change management

- Boundary enforcement: blocked actions outside approved workflows
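The minimum-fields and scoped-permissions controls above can be sketched as two small checks applied before anything leaves the voice layer. The field names, resource names, and permission pairs below are hypothetical and would be replaced by whatever the privacy and IT security review approves.

```python
# Hypothetical approved intake fields for a call-back request.
ALLOWED_FIELDS = ("caller_first_name", "callback_number", "program_requested")

# Hypothetical (resource, verb) pairs the integration may use.
SCOPED_PERMISSIONS = frozenset({
    ("referral_queue", "create"),
    ("program_directory", "read"),
})


def minimize_payload(captured: dict) -> dict:
    """Keep only approved fields; everything else is dropped before sending."""
    return {key: captured[key] for key in ALLOWED_FIELDS if key in captured}


def is_permitted(resource: str, verb: str) -> bool:
    """Allow an integration call only if the exact pair was approved."""
    return (resource, verb) in SCOPED_PERMISSIONS
```

Listing permissions as explicit pairs, rather than granting a resource wholesale, keeps a "create" approval from silently implying "read" or "update".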

What systems can Voice AI integrate with in a mental health program?

Do you need access to our EHR to run mental health call routing?

Can our IT team limit what data the Voice AI can see?

Can we deploy Voice AI with minimal integration first?

{
  "section": "Integration Boundaries and Least-Privilege Access (Mental Health)",
  "entity": "Peak Demand",
  "service": "Voice AI for Mental Health Services",
  "geo": ["Toronto", "Canada", "United States"],
  "use_cases": [
    "program directory routing",
    "referral intake queue creation (if authorized)",
    "governed call-back capture",
    "transfer to scheduling destinations",
    "audit log exports for review"
  ],
  "controls": [
    "least-privilege integration posture",
    "minimum required data fields",
    "scoped permissions and allowed actions",
    "test vs production segmentation",
    "access governance and change control"
  ],
  "delivery_model": "fully managed custom build",
  "cta": "https://peakdemand.ca/discovery"
}

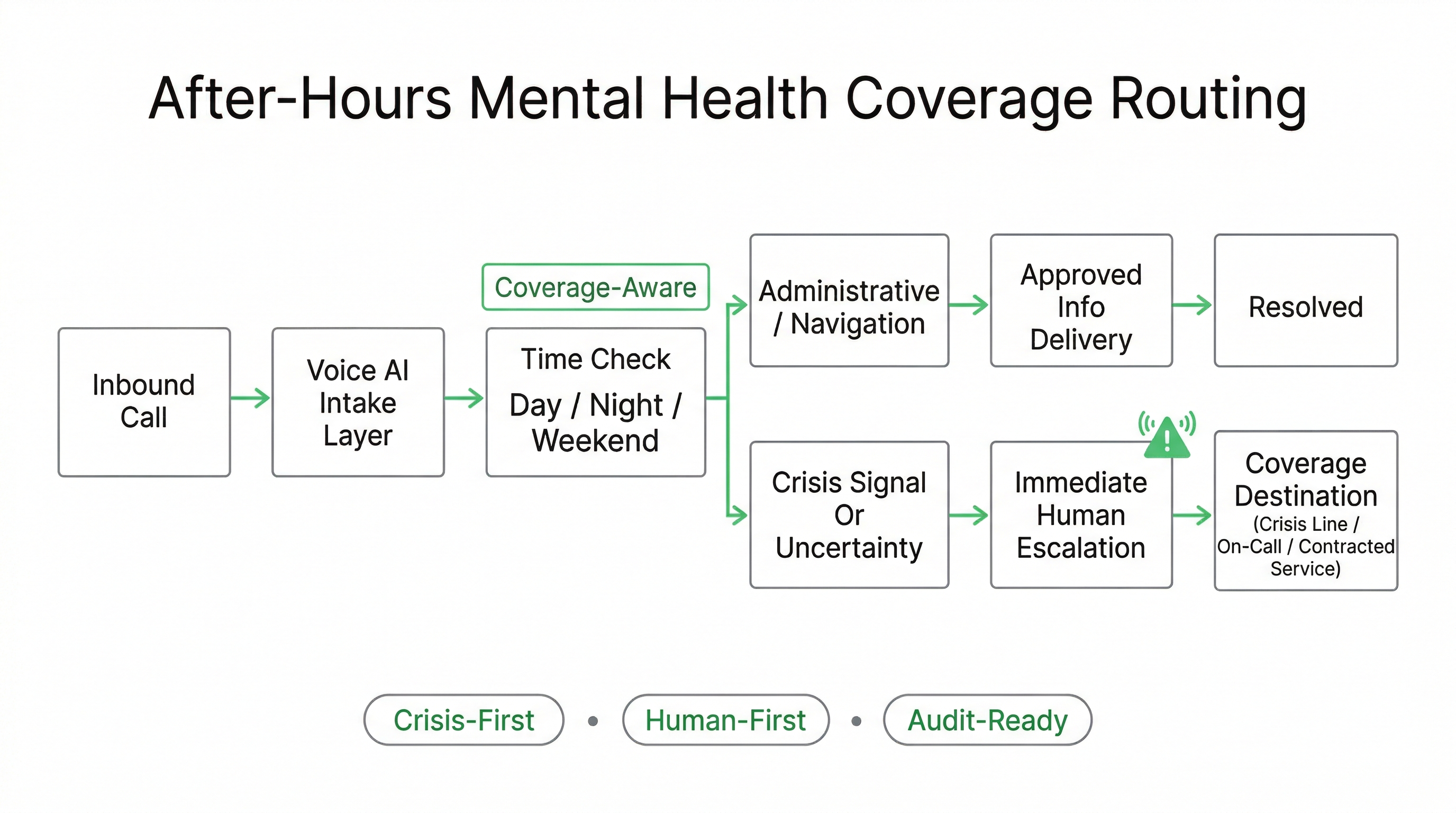

After-Hours Coverage: Overflow Routing With Crisis-First Escalation Rules

Mental health and community health lines often experience peak distress calls outside standard hours. After-hours coverage models can vary by region: on-call teams, contracted crisis services, mobile units, or centralized provincial/state resources. Voice AI can be configured to support time-based routing, overflow buffering, and defined escalation pathways, while preserving crisis-first safeguards.

The goal is not “automation at night.” The goal is governed access: reduce repeat calls for basic navigation and ensure urgent signals route immediately to approved human destinations based on your coverage map.

After-Hours Workflow Patterns

- Time-based routing: nights, weekends, holidays, program-specific coverage

- Overflow buffering: reduce abandoned calls during peak distress windows

- Approved information delivery: crisis resources, hours, locations, next steps

- Call-back capture (if authorized): limited fields routed to approved destinations

- Multi-region routing: route by caller location when policy requires

Crisis-First Controls

- Immediate escalation: crisis language triggers do not loop

- Coverage-aware handoff: correct destination by time-of-day

- Uncertainty escalation: low confidence triggers human routing

- No clinical advice posture: strictly routing and access support

- Audit-ready outcomes: reviewable escalation reason codes
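Coverage-aware handoff can be illustrated as a lookup from the current time to an approved destination. This is a simplified sketch: the 09:00 to 17:00 weekday window and the destination names are assumptions, and holidays and program-specific coverage are left out for brevity.

```python
from datetime import datetime, time

# Hypothetical staffed window; a real coverage map is defined per program.
DAYTIME_START = time(9, 0)
DAYTIME_END = time(17, 0)


def crisis_destination(now: datetime) -> str:
    """Pick the approved human destination for a crisis call at this time."""
    is_weekday = now.weekday() < 5  # Monday is 0, Sunday is 6
    in_staffed_hours = DAYTIME_START <= now.time() < DAYTIME_END
    if is_weekday and in_staffed_hours:
        return "on_call_clinician"
    # Nights and weekends go to the after-hours crisis service.
    return "contracted_crisis_line"
```

Every branch returns a human destination, so there is no time of day at which a crisis call is left inside the automated flow.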

Can Voice AI answer mental health calls after hours?

What happens if someone calls in crisis at night?

Can we route by region or postal code for community services?

Can Voice AI collect a callback request instead of keeping someone on hold?

{
  "section": "After-Hours and Overflow Support (Mental Health)",
  "entity": "Peak Demand",
  "service": "Voice AI for Mental Health Services",
  "geo": ["Toronto", "Canada", "United States"],
  "use_cases": [
    "time-based after-hours routing",
    "overflow buffering during peak distress windows",
    "approved crisis resource information delivery",
    "regional program routing (where policy allows)",
    "call-back capture (if authorized)"
  ],
  "controls": [
    "crisis-first escalation triggers",
    "coverage-aware human handoff",
    "uncertainty-to-human escalation",
    "no clinical advice posture",
    "audit-ready escalation reason codes"
  ],
  "delivery_model": "fully managed custom build",
  "cta": "https://peakdemand.ca/discovery"
}

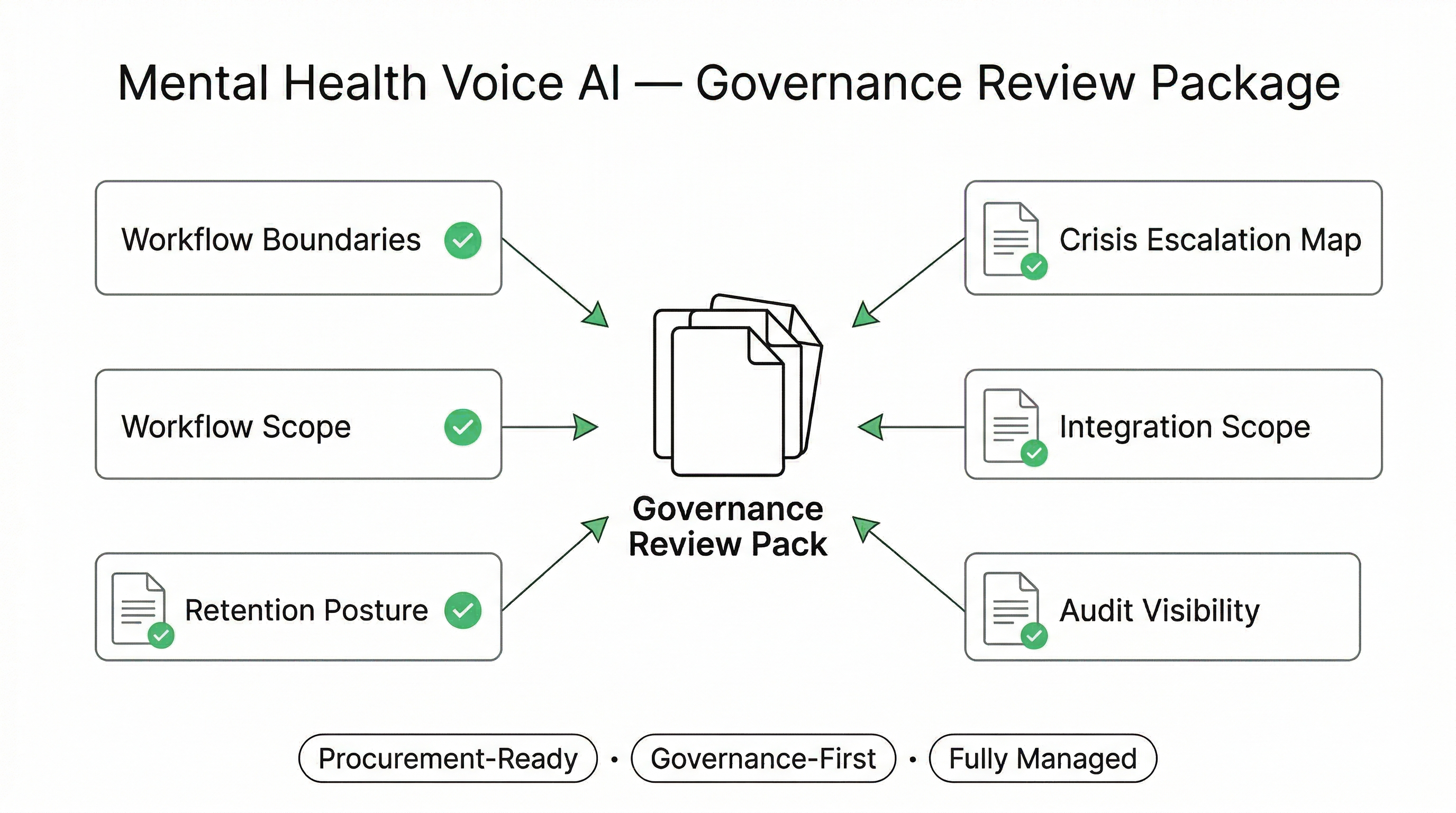

Procurement-Ready Deployment for Mental Health & Community Health Services

Behavioral health environments require documented, reviewable systems — not experimental automation. Peak Demand delivers a fully managed, governance-first Voice AI deployment model designed to support procurement, privacy officer, and IT security assessment before activation.

The objective is clarity: what the system does, what it does not do, how crisis escalation works, what data is captured, and how integration scope is restricted.

Documentation Typically Provided

- Workflow Boundary Definition: permitted vs restricted actions

- Crisis Escalation Map: triggers and approved human destinations

- Integration Scope Outline: systems touched and minimum data fields

- Retention Posture Overview: logging and storage parameters

- Audit Visibility Model: routing outcomes and escalation reason codes

Risk Mitigation Principles

- No Clinical Decision-Making: strictly intake and routing

- Human-First Escalation: crisis and uncertainty handoff

- Least-Privilege Access: restricted permissions

- Change Control: governance review before updates

- Defined Coverage Windows: approved after-hours logic

What documentation do you provide for mental health Voice AI review?

Can our privacy officer review the escalation and data controls before activation?

Is this a SaaS tool we just subscribe to?

Can this support RFP and vendor risk review processes?

{
  "section": "Procurement and Risk Review (Mental Health)",
  "entity": "Peak Demand",
  "service": "Voice AI for Mental Health Services",
  "geo": ["Toronto", "Canada", "United States"],
  "delivery_model": "fully managed custom build"
}

Operational Oversight: Reviewable Logs, Escalation Transparency, and Governance Reporting

Behavioral health programs require visibility into how intake and escalation workflows are functioning. A fully managed Voice AI deployment must support reviewable routing logs, escalation reason codes, and controlled reporting exports, enabling leadership, compliance, and privacy teams to monitor performance without expanding scope.

Oversight is not optional in mental health environments. Escalation events, after-hours transfers, and uncertainty-triggered handoffs should be auditable and aligned with your governance framework across Canada and the United States.

Operational Visibility

- Escalation reason codes and timestamps

- Transfer destinations by coverage window

- Routing outcome summaries

- Volume trends for intake categories

- Configurable retention posture for logs

Governance Controls

- Role-based access to reporting views

- Change control for workflow updates

- Documented scope boundaries

- Least-privilege export permissions

- Periodic governance review checkpoints
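The visibility items above suggest a simple event record with a reason code, timestamp, destination, and coverage window, which can then be summarized for review. The sketch below shows one possible shape. The field names and reason codes are hypothetical, not an actual export schema.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class EscalationEvent:
    timestamp: datetime
    reason_code: str       # e.g. "crisis:self_harm" or "low_confidence"
    destination: str       # approved human transfer target
    coverage_window: str   # e.g. "daytime" or "after_hours"


def count_by_reason(events) -> Counter:
    """Summarize how many escalations occurred for each reason code."""
    return Counter(event.reason_code for event in events)


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    events = [
        EscalationEvent(now, "crisis:self_harm", "crisis_line", "after_hours"),
        EscalationEvent(now, "low_confidence", "triage_team", "daytime"),
    ]
    for reason, total in count_by_reason(events).items():
        print(reason, total)
```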

Can we see how many crisis escalations occurred?

Can our compliance team audit Voice AI routing decisions?

Can we control who has access to escalation reports?

Is reporting configurable by region or program?

{
  "section": "Operational Oversight and Governance Reporting (Mental Health)",
  "entity": "Peak Demand",
  "service": "Voice AI for Mental Health Services",
  "geo": ["Toronto", "Canada", "United States"],
  "use_cases": [
    "escalation tracking and reporting",
    "routing outcome visibility",
    "coverage window transfer monitoring",
    "governance review support"
  ],
  "controls": [
    "role-based reporting access",
    "least-privilege export permissions",
    "documented workflow boundaries",
    "audit-ready escalation logs",
    "change control checkpoints"
  ],
  "delivery_model": "fully managed custom build",
  "cta": "https://peakdemand.ca/discovery"
}

Modernize Mental Health Intake Lines — With Defined Scope and Human-First Escalation

If your mental health or community health program is experiencing high call volume, misroutes, after-hours strain, or inconsistent escalation, we can help you map a governed Voice AI deployment model built around defined boundaries and reviewable controls. No commitment required.

What You Get in a 30-Minute Discovery Session

- Workflow gap analysis: where callers get stuck, bounce, or abandon.

- Safe automation boundaries: what can be routed vs what must escalate.

- Crisis escalation mapping: approved destinations by time-of-day and coverage.

- Integration posture review: least-privilege access scope discussion.

- Phased rollout roadmap: pilot → program expansion → network deployment.

Good Fit For

- Mental health programs managing intake and referral routing

- Community health networks coordinating multi-service navigation

- Organizations with after-hours strain and limited coverage windows

- Teams modernizing legacy IVR with governed escalation controls

- Leaders preparing for RFP or privacy/security review

{
  "page": "Voice AI for Mental Health & Community Health Services",
  "provider": "Peak Demand",
  "provider_type": "fully managed voice AI agency",
  "hq": "Toronto, Ontario, Canada",
  "regions_served": ["Canada", "United States"],
  "delivery_model": "fully managed custom build",
  "primary_outcomes": [
    "reduce abandoned calls",
    "improve routing consistency",
    "support crisis-first escalation",
    "standardize program navigation",
    "enable audit-ready reporting"
  ],
  "primary_use_cases": [
    "intake routing and referral navigation",
    "community service directory routing",
    "after-hours overflow capture (if authorized)",
    "coverage-aware escalation",
    "legacy IVR modernization"
  ],
  "compliance_context": [
    "PHIPA (Ontario)",
    "PIPEDA (Canada)",
    "HIPAA-aligned deployment (US where applicable)"
  ],
  "cta": "https://peakdemand.ca/discovery"
}

Explore Voice AI Pathways for Mental Health and Community Health Services

Mental health and community health workflows usually begin with intake support, human-first escalation rules, and safe handoff logic. From there they can expand into after-hours access, public-sector routing, and broader hospital or regional care coordination, where communication consistency matters but exceptions still need clear human review.

The resources below connect the main healthcare hub, the primary service entry points, adjacent escalation-critical access models, and the governance pages most relevant to regulated healthcare communication environments in Canada and the United States.

Core Healthcare Entry Points

Intake, Escalation, and Community Care Access

Hospital Routing, Call Handling, and Access Coordination

{
  "module": "healthcare_interlinks_mental_health",
  "page_context": "voice-ai-mental-health-community-health-intake-escalation-support",
  "core_entry_points": [
    "https://peakdemand.ca/healthcare-voice-ai-resource-hub",
    "https://peakdemand.ca/ai-voice-receptionist-after-hours-answering-service-for-healthcare-providers-appointment-booking",
    "https://peakdemand.ca/voice-ai-healthcare-call-center-automation"
  ],
  "intake_escalation_and_community_care_access": [
    "https://peakdemand.ca/voice-ai-emergency-department-surge-support",
    "https://peakdemand.ca/ai-after-hours-healthcare-call-handling-24-7-medical-answering-hospitals-clinics",
    "https://peakdemand.ca/voice-ai-public-sector-health-systems-regional-booking-lines"
  ],
  "hospital_routing_call_handling_and_access_coordination": [
    "https://peakdemand.ca/voice-ai-hospital-call-routing-multi-location-networks",
    "https://peakdemand.ca/voice-ai-healthcare-call-center-automation",
    "https://peakdemand.ca/voice-ai-healthcare-centralized-scheduling-center"
  ],
  "governance_and_compliance": [
    "https://peakdemand.ca/phipa-compliant-ai-voice-receptionist-ontario-clinics",
    "https://peakdemand.ca/hipaa-compliant-voice-ai-receptionist-healthcare",
    "https://peakdemand.ca/enterprise-voice-ai-compliance-certifications-rfp-vendor-ccai-customer-service-healthcare-utilities-government-canadian-ai-agency"
  ],
  "intent": "Mental health and community health internal linking + pathway clustering + LLM surfacing + crawl reinforcement"
}

Regulatory & Governance References for Mental Health Services (Canada + United States)

Mental health and behavioral health services frequently involve sensitive personal health information and heightened duty-of-care expectations. The following regulatory and governance references support privacy, security, and compliance review conversations for Voice AI deployments in community and behavioral health environments.

Canada (PHIPA / PIPEDA)

United States (HIPAA / Behavioral Health Context)

Are you guaranteeing regulatory compliance?

Can our privacy or legal team use these references during procurement?

Do you position Voice AI as a clinical decision-making system?

{
  "section": "References (Mental Health Voice AI)",
  "entity": "Peak Demand",
  "geo": ["Canada", "United States"],
  "reference_types": [
    "PHIPA",
    "IPC Ontario",
    "PIPEDA",
    "OPC Canada",
    "HIPAA Privacy Rule",
    "HIPAA Security Rule",
    "HIPAA Breach Notification Rule",
    "SAMHSA"
  ]
}